Seedance 2 Is a Big Step Forward for AI Anime

ByteDance's new video model changes what's possible for anime creators

Seedance 2.0 Demo pic.twitter.com/example

— Esoteric Cofe (@EsotericCofe) February 2026

ByteDance just released Seedance 2, and it might be the biggest single leap we've seen in AI video generation for anime. Previous models could produce impressive clips, but they struggled with the things anime creators actually need: consistent characters, controlled camera work, and audio that doesn't feel bolted on. Seedance 2 addresses all of these.

Why Previous AI Video Models Fell Short for Anime

AI video generation has improved rapidly, but anime has always been a uniquely demanding use case. Traditional anime production relies on extreme consistency: a character's face, hair, and outfit must look identical across hundreds of shots. Camera angles follow deliberate cinematic grammar. Voice acting and sound design are tightly synchronized.

Most AI video models treat each generation as an isolated event. You type a prompt, you get a clip. But if you generate two clips of the same character, they might have different eye colors, different hair lengths, or a completely different face. That's a dealbreaker for any kind of storytelling.

Motion quality has been another pain point. AI-generated anime characters tend to move in floaty, unnatural ways, with limbs that stretch and distort mid-clip. For a medium where motion is everything, this matters.

What Seedance 2 Brings to the Table

Seedance 2 is built on a Dual-Branch Diffusion Transformer architecture that generates video and audio together in a single generation pass. That's a meaningful technical distinction: instead of generating video first and then trying to match audio to it, both are produced at the same time, which results in much tighter synchronization.

Here's what stands out for anime use cases:

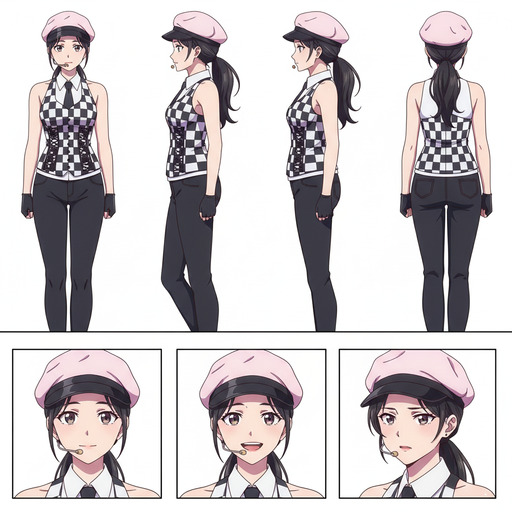

Character Consistency Across Shots

Seedance 2 accepts up to 12 reference files per generation alongside the text prompt, drawn from as many as 9 images, 3 videos, and 3 audio files. For anime creators, this means you can feed in character reference sheets, specific poses, and style guides to maintain visual identity across multiple generations. The model preserves facial features, clothing details, and proportions in a way that previous models simply couldn't.
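If you're assembling reference sets by hand, a quick pre-flight check can catch an oversized upload before you spend a generation on it. The sketch below is not part of any Seedance tooling. It reads the numbers above as per-type caps (9 images, 3 videos, 3 audio files) under a combined limit of 12, which is an assumption, and the extension-to-type mapping and the function name `check_reference_set` are hypothetical.

```python
from collections import Counter
from pathlib import Path

# Limits as quoted in the article, read as per-type caps plus a combined cap (assumption).
PER_KIND_LIMIT = {"image": 9, "video": 3, "audio": 3}
TOTAL_LIMIT = 12

# Hypothetical mapping from file extension to reference type.
KIND_BY_EXTENSION = {
    ".png": "image", ".jpg": "image", ".jpeg": "image", ".webp": "image",
    ".mp4": "video", ".mov": "video",
    ".mp3": "audio", ".wav": "audio",
}


def check_reference_set(filenames):
    """Return a list of problems; an empty list means the set fits the limits."""
    problems = []
    counts = Counter()
    for name in filenames:
        kind = KIND_BY_EXTENSION.get(Path(name).suffix.lower())
        if kind is None:
            problems.append(f"unrecognized file type: {name}")
        else:
            counts[kind] += 1
    # Check each per-type cap, then the combined cap.
    for kind, limit in PER_KIND_LIMIT.items():
        if counts[kind] > limit:
            problems.append(f"too many {kind} files: {counts[kind]} > {limit}")
    if len(filenames) > TOTAL_LIMIT:
        problems.append(f"too many files overall: {len(filenames)} > {TOTAL_LIMIT}")
    return problems
```

Under this reading, a set with 9 character images, 3 motion clips, and 1 voice sample passes every per-type cap but still fails, because 13 files exceed the combined limit.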

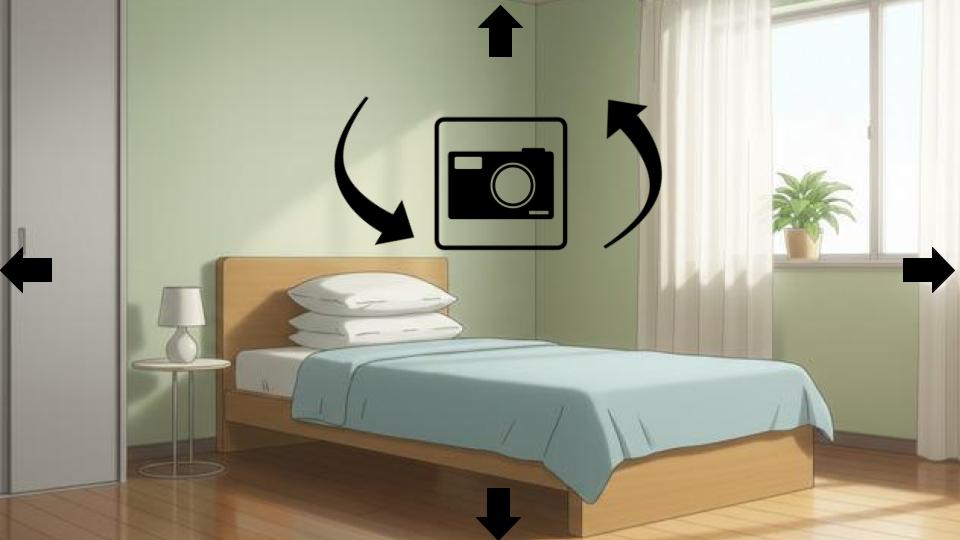

Cinematic Camera Control

Anime relies heavily on camera language: dramatic zoom-ins, slow pans across environments, tracking shots during action sequences. Seedance 2 introduces an @mention syntax that gives creators precise control over camera movements and transitions. You can specify complex multi-shot sequences with automatic camera transitions, something that was essentially impossible with earlier models.

Native Lip-Sync and Audio

One of Seedance 2's headline features is phoneme-level lip-sync in 8+ languages. For anime, where dialogue-driven scenes are central, this is transformative. Previous workflows required generating video, then manually syncing audio, often with poor results. Seedance 2 produces audio-driven facial muscle movements that approach professional motion-capture quality.

Up to 2K Resolution

The model outputs up to 1080p natively with 2K upscaling, which matches the resolution of modern anime productions. Earlier AI video models often topped out at lower resolutions or introduced visible artifacts when upscaled.

What This Means for Anime Creators

The gap between "AI-generated video clip" and "usable anime footage" has been closing steadily, but Seedance 2 narrows it significantly. Character consistency means you can actually build narratives. Camera control means you can compose shots with intention. Audio sync means dialogue scenes don't require painful post-production workarounds.

None of this means Seedance 2 replaces traditional anime production. It doesn't. But for indie creators, hobbyists, and small studios experimenting with AI-assisted workflows, it opens up possibilities that were unrealistic just months ago.

The model also excels at style transfer. Feed it anime reference images and it can generate video that matches the aesthetic convincingly, whether you're going for a Ghibli-style warmth, a cyberpunk edge, or a clean slice-of-life look.

How It Compares

Seedance 2 isn't the only AI video model available, but it currently leads in controllability and consistency, the two things that matter most for anime production. Models like Sora 2 may produce more photorealistic physical simulations, but when it comes to stylized content with persistent characters, Seedance 2 has the edge.

Seedance 2 is also about 30% faster than its predecessor, which matters in practice. Faster generation means more iterations in the same amount of time, and more iterations usually mean better results.
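To put a rough number on that, here is a back-of-the-envelope sketch. The 30% figure can be read two ways: each clip takes 30% less time, or you get 30% more clips per hour. The code computes both. The one-hour session and the 100-second baseline per clip are made-up illustrative values, not measurements, and the function name `iteration_estimates` is hypothetical.

```python
from fractions import Fraction


def iteration_estimates(session_seconds, baseline_seconds_per_clip, speedup_percent=30):
    """Estimate whole clips per session under two readings of 'N% faster'."""
    session = Fraction(session_seconds)
    base = Fraction(baseline_seconds_per_clip)
    pct = Fraction(speedup_percent, 100)
    return {
        # Predecessor: one clip every `base` seconds.
        "baseline": int(session // base),
        # Reading 1: each clip takes N% less time.
        "time_cut": int(session // (base * (1 - pct))),
        # Reading 2: throughput (clips per unit time) rises by N%.
        "throughput_up": int(session // (base / (1 + pct))),
    }


print(iteration_estimates(3600, 100))
# {'baseline': 36, 'time_cut': 51, 'throughput_up': 46}
```

Either way, that's roughly 10 to 15 extra attempts per hour at a tricky shot under these assumed numbers.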

What's Next

We're actively working on integrating Seedance 2 into AutoWeeb. Our goal is to combine Seedance 2's raw video generation capabilities with AutoWeeb's anime-specific tools: character sheets, scene builders, and style systems that are purpose-built for anime workflows.

If you're interested in AI anime video, this is the most exciting development in a while. Stay tuned.
