7 AI Anime Video Prompt Mistakes That Ruin Your Output

Most failed generations aren't a model problem — they're a prompt problem. Here's what's going wrong and how to fix it.

Bad AI anime video output almost always traces back to a bad prompt. Not a model failure, not a platform limitation. A prompt that left too much unspecified, contradicted itself, or asked a single four-second clip to do the work of five. The model filled in the blanks with statistical guesses, and the guesses didn't match what you had in mind.

Every mistake below is fixable. Each one has a clear cause, a clear symptom in the output, and a specific fix you can apply to your next generation. If your clips have been coming out flat, inconsistent, or just wrong, one of these seven is almost certainly why.

Mistake 1: Vague prompts that leave everything up to the model.

The most common prompt mistake is describing the category of a scene instead of the scene itself. "A cool anime fight scene" is a category. "An intense sword fight on a crumbling stone bridge at sunset, two rivals circling each other before one lunges" is a scene. The first prompt asks the model to guess what "cool" means visually, which character is fighting, where, in what conditions, and in what style. The model will produce something, but it will be its best statistical average of "anime fight," not your specific vision.

Every noun in your prompt should be specific enough to answer the question: compared to what? Not "dark forest" — pine forest at midnight, knee-level mist, single shaft of moonlight cutting through the canopy. Not "running away" — sprinting down a rain-soaked alley, coat flying, one hand braced against a wall as she turns the corner. Vagueness in the prompt becomes vagueness in the output, every time.

The fix: Before submitting any prompt, read it back and replace every generic descriptor with a specific one. If a word could describe a hundred different scenes, it is doing no work.

Mistake 2: Inconsistent character descriptions across clips.

Your character has short brown hair with gold eyes and a black hoodie in clip one. In clip three, the model gives them long auburn hair and a school uniform because you shortened the description for convenience. Now you have two different characters in the same video, and no amount of editing will reconcile them in post.

This happens because AI video models have no memory between generations. Every clip is a fresh generation. The model doesn't know what your character looked like in the previous clip. If you don't include the same character anchor in every prompt, appearance drift is inevitable, and it compounds with every clip you generate.

The fix: Write your character description once, save it, and paste it verbatim into every clip prompt. Hair color and length, eye color, and the most visible clothing item are the minimum. For close-up shots, add facial features. For full-body shots, add outfit details below the waist. If you're using AutoWeeb, save the character to your character library — the system locks visual consistency across every generation without requiring you to repeat the description manually.
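For anyone assembling prompts in a script rather than by hand, the "write once, paste verbatim" rule can be sketched as a single constant reused for every clip. This is a minimal illustration, not an AutoWeeb feature: `CHARACTER_ANCHOR` and `with_character` are hypothetical names, and the anchor text simply reuses the example character from this section.

```python
# Hypothetical anchor text, reusing the example character from this section.
CHARACTER_ANCHOR = "short brown hair, gold eyes, black hoodie"


def with_character(shot: str, anchor: str = CHARACTER_ANCHOR) -> str:
    """Prefix a shot description with the unchanged character anchor."""
    return f"{anchor}. {shot.strip()}"


# Every clip prompt starts with exactly the same character description.
clip_prompts = [
    with_character("Standing on a rooftop at night, static wide shot."),
    with_character("Turning toward the camera, slow push-in toward the face."),
]

for prompt in clip_prompts:
    print(prompt)
```

Because the anchor lives in one place, shortening it "for convenience" in a later clip is no longer possible by accident.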

Mistake 3: Poor motion direction that produces static or generic movement.

Specifying what a character does is not the same as specifying how they move. "She raises her sword" tells the model the action. It does not tell the model that she raises it slowly, with both hands trembling, energy crackling along the blade as she holds the peak position. Anime has a specific motion vocabulary, and using it changes the output dramatically.

Generic motion direction produces generic anime movement, the kind that looks like a default loop rather than a specific story beat. Anime-native motion language (held tension before a strike, a slow build followed by a sudden snap of speed, a single speed-line cut across the frame at the moment of impact) signals to the model what kind of scene this is and how the motion should be phrased within the clip.

The fix: Describe not just what the character does but the timing, weight, and rhythm of the motion. Useful phrases: "builds slowly then releases in a single sharp motion"; "held at peak tension for two beats before the strike"; "dramatic speed lines on the forward lunge"; "slow motion at the moment of impact, then full speed on the landing." One specific motion note per clip is enough to shift the output from generic to intentional.

Mistake 4: Missing style references that let the model pick its own defaults.

Without a named art style, most AI video models default to a generalized "anime aesthetic." That default is not wrong exactly, but it commits to no particular visual language: no specific line weight, no color palette logic, no shading approach, no character proportion convention. The output looks like anime in the same way that a beige wall looks like a color. Technically accurate, entirely forgettable.

Named styles anchor the model to a specific set of production conventions. Demon Slayer art style triggers high contrast, bold ink outlines, and intense color saturation. Ghibli naturalism triggers soft edges, muted earthy palettes, and attentive environmental detail. Cyberpunk anime aesthetic triggers neon ambient glow, hard shadow geometry, and blue-shifted color temperature. Each of these shapes not just how the clip looks but how it moves and feels.

The fix: Name one art style per prompt and commit to it. If you want qualities from multiple styles, name the specific visual properties rather than the style names: bold ink outlines with soft pastel fill and Ghibli-style environmental texture. AutoWeeb supports over a dozen named styles that can be selected before generation or called directly in the prompt.

Mistake 5: Camera confusion from no direction, or too many directions.

Two versions of this mistake exist, and both produce weak output. The first is including no camera direction at all, which pushes the model toward a static medium shot with minimal movement. That framing isn't inherently bad, but it means you're handing off one of the most emotionally powerful variables in the clip to the model's defaults. The second version is including two or three competing camera instructions in one prompt. "Slow push-in while tracking from behind and tilting up" asks the model to do three different things simultaneously. It will guess which one you meant, and the guess will be inconsistent.

Camera direction is the single highest-leverage addition a beginner can make to an otherwise complete prompt. A slow push-in toward a character's face builds intimacy and tension. A low-angle upward shot makes the same character feel powerful. A wide static shot communicates scale and isolation. Same character, same action, completely different emotional register.

The fix: Pick one camera direction per clip and state it clearly. Useful options: slow push-in toward her face, low angle looking up, tracking shot from behind, static wide shot, close-up on hands, Dutch angle from the left, crane pull-back to reveal the full scene. One clear direction per clip, applied consistently, will noticeably elevate your output.
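A rough way to catch the "too many directions" version of this mistake is to count camera terms before submitting. The sketch below is a simple keyword check under stated assumptions: `CAMERA_TERMS` is a hypothetical, incomplete list drawn from the options named above, and substring matching will miss phrasings it does not know about.

```python
# Hypothetical, incomplete list of camera terms; extend it for your own prompts.
CAMERA_TERMS = (
    "push-in",
    "pull-back",
    "tracking",
    "tilt",
    "low angle",
    "dutch angle",
    "wide shot",
    "close-up",
)


def camera_directions(prompt: str) -> list[str]:
    """Return the known camera terms that appear in the prompt."""
    text = prompt.lower()
    return [term for term in CAMERA_TERMS if term in text]


overloaded = "Slow push-in while tracking from behind and tilting up"
found = camera_directions(overloaded)
if len(found) != 1:
    print(f"Expected one camera direction, found {len(found)}: {found}")
```

A prompt that returns zero matches has the first version of the mistake; one that returns two or more has the second.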

Mistake 6: Ignoring lighting and letting the model set the mood.

Lighting defines the emotional register of a scene more than almost any other element, and it is one of the most skipped layers in beginner prompts. Without a lighting instruction, the model picks a neutral ambient light that keeps the scene technically visible but emotionally inert. That's fine for a reference sheet. It's wrong for a dramatic confrontation, a quiet confession, or a battle that should feel desperate.

Anime uses lighting in ways that are specific and recognizable: the warm golden-hour backlight of a peaceful slice-of-life moment, the cold hard blue of a tense rooftop standoff, the flickering orange of a campfire conversation, the flat fluorescent white of an interrogation, the deep violet of a scene that is about to go very wrong. When you name the lighting, you're not just adjusting brightness. You're telling the model what kind of scene this is emotionally.

The fix: Pair a light source with a color temperature in every prompt. Examples: cold moonlight, steel blue ambient glow; warm amber lantern light casting soft shadows upward; harsh overhead fluorescent, no warm tones; golden hour backlight, silhouette forming at the edges; flickering firelight, orange and deep shadow alternating. Two descriptors, ten words, and the mood of the entire clip shifts.

Mistake 7: Overloading one clip with too many events.

An AI anime video clip is typically four to eight seconds long. Trying to pack a character entrance, a confrontation, an emotional reaction, and a dramatic exit into a single prompt is asking the model to compress a two-minute scene into eight seconds. What you get is a rushed, incoherent sequence where every beat exists but none of them land. The model cannot slow down time within a clip. It will execute all events in sequence, which means four events in an eight-second clip get about two seconds each, and less in a shorter clip.

The mistake usually comes from thinking about scenes rather than shots. A scene has multiple beats. A shot has one. Prompts are shot-level instructions, not scene-level outlines. When you plan at the shot level, every clip has a single primary action or emotional beat that it can execute completely, and the sequence of clips builds the scene.

The fix: Before writing a prompt, ask: what is the single most important thing happening in this specific moment? Write the prompt around that one thing. If you find yourself connecting two events with "and then," you have two clips. Plan them separately. Three clean shots in sequence will always outperform one overloaded prompt, and the final result will be more cinematic for it.
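The "and then" test can be applied mechanically when drafting from a scene outline. The following is a minimal sketch, assuming the outline joins its beats with the literal phrase "and then"; `split_into_shots` is a hypothetical helper, and each fragment it returns still needs to be written up as a full prompt with its own camera, lighting, and style lines.

```python
import re


def split_into_shots(scene: str) -> list[str]:
    """Split a scene outline into one fragment per beat at each 'and then'."""
    parts = re.split(r",?\s+and then\s+", scene.strip(), flags=re.IGNORECASE)
    # Trim stray spaces and trailing periods, and drop empty fragments.
    return [part.strip(" .") for part in parts if part.strip(" .")]


outline = "She enters the hall and then draws her sword, and then she lunges."
shots = split_into_shots(outline)
for number, shot in enumerate(shots, start=1):
    print(f"Clip {number}: {shot}")
```

If the function returns more than one fragment, the outline describes more than one clip.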

Applying all seven fixes at once.

These mistakes tend to travel together. A vague prompt usually also lacks camera direction, skips lighting, and ignores style. Fixing one while leaving the others produces only incremental improvement. Fixing all seven at once produces a dramatically better generation.

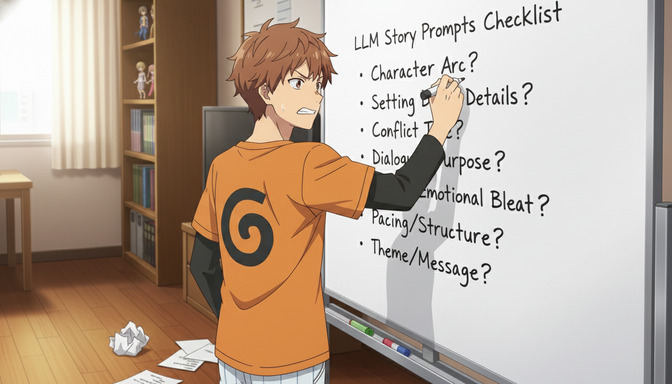

A practical checklist before submitting any prompt:

- Is every noun specific enough that it could only describe one thing?

- Is the character description identical to every other clip featuring this character?

- Does the prompt describe how the character moves, not just what they do?

- Is a named art style included?

- Is there exactly one camera direction?

- Is there a light source with a color temperature?

- Does the prompt describe one primary beat, not a sequence of events?

If all seven answers are yes, submit the prompt. If any of them is no, the fix is usually one sentence. The model rewards specificity directly and immediately.
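The checklist also maps naturally onto a small prompt template. The sketch below is one possible layout, not a format any platform requires: `ShotPrompt` and its field names are hypothetical, the example values are borrowed from earlier sections, and the check only confirms that each layer is filled in. Whether the wording is specific enough, and whether the character text matches your other clips, still has to be judged by eye.

```python
from dataclasses import dataclass, fields


@dataclass
class ShotPrompt:
    """One clip, one beat. Field names are illustrative, not a platform schema."""

    character: str  # verbatim character anchor, identical across clips
    action: str     # the single primary beat of this shot
    motion: str     # timing, weight, and rhythm of the movement
    setting: str    # specific place and conditions
    style: str      # one named art style
    camera: str     # exactly one camera direction
    lighting: str   # light source paired with a color temperature

    def missing(self) -> list[str]:
        """Names of layers that were left empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        """Join all layers into one prompt string, refusing incomplete prompts."""
        gaps = self.missing()
        if gaps:
            raise ValueError(f"fill in before submitting: {', '.join(gaps)}")
        parts = [getattr(self, f.name).strip().rstrip(".") for f in fields(self)]
        return ". ".join(parts) + "."


prompt = ShotPrompt(
    character="short brown hair, gold eyes, black hoodie",
    action="one rival lunges with a sword",
    motion="held at peak tension for two beats before the strike",
    setting="crumbling stone bridge at sunset",
    style="Demon Slayer art style",
    camera="low angle looking up",
    lighting="golden hour backlight, silhouette forming at the edges",
)
print(prompt.render())
```

Leaving any field blank makes `render` raise an error naming the missing layer, which is the one-sentence fix the checklist points to.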

Frequently asked questions about AI anime video prompt mistakes.

Why does my anime video character look different in every clip?

AI video models have no memory between generations. Each clip is generated independently, so if your character description changes or shortens between prompts, the model fills the gaps differently each time. The fix is to paste the same character description verbatim into every clip prompt, or to use AutoWeeb's character library, which anchors visual consistency automatically without repeated text.

How specific does an AI anime video prompt need to be?

Specific enough that every noun can only describe one thing. If a descriptor could apply to a hundred different scenes, it is not doing useful work. A practical test: read each noun back and ask "compared to what?" If you can't answer that question precisely, replace the descriptor with one that you can.

Do I really need to include camera direction in every prompt?

Yes. Without camera direction, the model defaults to a neutral medium shot with minimal movement. That default is fine as a starting point and wrong as a deliberate choice. Camera direction is one of the highest-leverage additions you can make to an otherwise complete prompt. Pick one direction, state it clearly, and the emotional impact of the clip changes immediately.

What happens if I name two art styles in one prompt?

The model will attempt to blend them, and the result is usually a compromise that achieves neither style cleanly. If you want specific qualities from two styles, name the visual properties instead of the style names: "bold ink outlines with Ghibli-style soft color fill" is more actionable than "Demon Slayer and Ghibli style." One named style per prompt is the cleaner approach.

Why does my prompt keep generating output that doesn't match the mood I wanted?

Missing lighting is almost always the cause. Mood in anime is carried by lighting conditions more than almost any other element. Without a named light source and color temperature, the model picks neutral ambient light, which reads as emotionally flat. Add a lighting line to any prompt that has a specific emotional target: cold blue moonlight for tension, warm amber lantern scatter for intimacy, harsh overhead white for dread.

How many events can I fit into one AI anime video clip?

One. A single primary action or emotional beat per clip. A clip is four to eight seconds of real output time. If you need a character to enter, confront someone, and react, that is three clips. Plan them as separate prompts in a storyboard sequence. The shots will be cleaner and the assembled scene will be more cinematic than any single overloaded prompt could produce.

What is the fastest way to improve my AI anime video prompts?

Add one camera direction line to every prompt you write, right now, before changing anything else. It is the single highest-return habit change for prompting. Once that becomes automatic, work through the remaining six layers: character anchor, motion specificity, art style, lighting condition, scene detail, and single-beat focus. Each layer you add consistently improves output quality noticeably.

Does AutoWeeb handle any of this automatically?

Yes. AutoWeeb's video agent builds structured prompts from plain-English scene descriptions, applying camera direction, style anchoring, motion language, and lighting conditions based on the scene type. The character library handles consistency automatically across clips. For users who want to learn manual prompting, the agent output is a useful reference for what a complete prompt looks like at each layer. For users who want to generate without learning the structure first, the agent handles it entirely.

For a complete breakdown of how to build strong prompts from scratch, the guide to writing the best AI anime video prompts from beginner to pro covers all six structural layers with templates and advanced examples. If you want to start with a character built from your own photo before prompting a single video, the step-by-step guide to turning yourself into an anime video walks through the full workflow from selfie upload to finished clip.